Personalisation vs privacy: a ‘stark and explicit’ trade-off


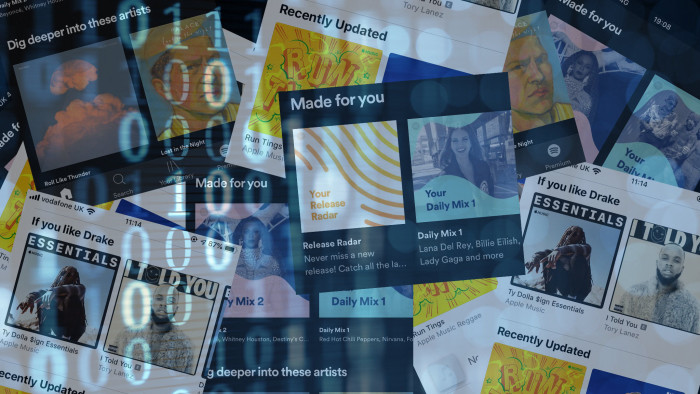

In the battle for consumer attention, internet companies have long attempted to personalise their services and offer tailored content. Netflix and Spotify have both built loyal followings on the strength of their recommendation algorithms, and businesses are adept at using data from Facebook and Google to target their advertising.

But ever-better personalisation comes at a cost to privacy. The nature of targeted advertising and recommendations means that businesses know who their customers are and what they are interested in — and much of this information is gathered with little informed consent from the consumer.

“The trade-off between privacy and personalisation is stark and explicit . . . the more data you collect, the more effectively [a technology company] can personalise a service,” says Dipayan Ghosh, a privacy expert at the Shorenstein Center at Harvard Kennedy School.

A series of high-profile exposés and regulatory moves have fuelled calls for technology companies to strike a better balance between personalisation and privacy.

In June, the UK’s data regulator reported that the $200bn online advertising industry, which Google dominates, is operating illegally. The Information Commissioner’s Office found that customers’ personal data were being used without consent in the real-time auctions that underpin online advertising, and gave the adtech industry six months to clean up its practices. Google insists that it complies with the EU’s GDPR legislation and local laws.

However, in a sign of how pressure on the industry continues to mount, Ireland’s data regulator has opened an inquiry into Google’s online advertising exchange. Evidence submitted in September suggested that the company secretly feeds sensitive data, such as information on race and political affiliations, to advertisers. Google insists it does not serve personalised ads or send bid requests to bidders without user consent, and that it is co-operating with investigations in Ireland and the UK.

Part of the challenge is informing consumers of their rights. “Regulations, such as the GDPR, have assisted in stipulating data protection and data use to protect personally identifiable information,” says Rob Robinson, global head of security at Telstra Purple, an Australian tech services group.

“However, the next stage is to increase awareness of this choice . . . so that people are not only presented with choice of personalisation versus privacy, but they are equipped to make informed choices themselves,” he adds.

Activists’ concerns extend beyond informed consent. Privacy International, a London-based charity that has campaigned against opaque advertising practices, says intrusive data collection can be discriminatory and manipulative, and comes with a growing security risk.

Industry experts say weak regulation is partly to blame. “The US, where all these companies are incorporated . . . lacks any sort of robust regulatory regime for targeted advertising,” says Mr Ghosh. “Companies built business models . . . based on three practices: the unhindered collection of personal information, the creation of opaque algorithms that create content, and the development of tremendously compelling services that come at the expense of privacy.”

Advertisers contend that personalisation efforts have a long way to go before they can become truly relevant, and that they are obliged to strike a balance between relevance and intrusion. A Gartner survey in March found that nearly 40 per cent of customers would stop doing business with companies if they found their personalisation “creepy”.

They also argue that customers are willing to share data in exchange for a better online experience. A study by SmarterHQ, a behavioural marketing company, found that 90 per cent of customers would willingly share behavioural data for an easier and cheaper shopping experience. The same report found that Amazon was the most trusted among major technology companies for responsible data practices, followed by Apple.

As a result, academics and industry leaders are exploring new approaches to resolve the tension between personalisation and privacy, including fully homomorphic encryption and differential privacy.

Fully homomorphic encryption is “a new mathematical technique that would allow you to search for the Financial Times, for example, without Google knowing it was you who ran the search,” Mr Ghosh says. “Differential privacy injects noise into the data, so the company still has just enough information to provide the service without necessarily identifying the user.”
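To make the idea of “injecting noise” concrete, here is a minimal sketch of one standard differential privacy technique, the Laplace mechanism, applied to a simple count. It is an illustration only and does not describe how any company named in this article implements the technique. The function name `private_count`, the privacy parameter `epsilon` and the sample data are all assumptions made for the example.

```python
import random


def private_count(records, predicate, epsilon, rng=None):
    """Return a noisy count of the records matching `predicate`.

    Hypothetical helper for illustration. A smaller `epsilon` adds more
    noise, giving stronger privacy and a less accurate answer.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()

    true_count = sum(1 for record in records if predicate(record))

    # Adding or removing one person changes a count by at most 1,
    # so the noise scale is 1 / epsilon.
    scale = 1.0 / epsilon

    # The difference of two independent exponential draws with the same
    # rate follows a Laplace distribution centred on zero.
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return true_count + noise


if __name__ == "__main__":
    # Made-up data: whether each of 1,000 users clicked on an advert.
    sample_rng = random.Random(7)
    clicks = [sample_rng.random() < 0.3 for _ in range(1000)]
    print(private_count(clicks, lambda clicked: clicked, epsilon=0.5))
```

Each released figure is slightly wrong, but across many queries the noise averages out, so aggregate trends stay usable while any single person’s presence in the data is masked. The choice of `epsilon` is where the trade-off between privacy and personalisation becomes an explicit number.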

Facebook has taken small steps towards differential privacy, integrating the technique into a project to allow researchers to study the role of social media in elections. “For this project, the tool uses differential privacy to prevent those who have access to the data from determining whether a specific individual contributed to the data set,” Facebook says, adding that the tool will offer “strong privacy protection while still facilitating reliable research”.

However, experts question whether technology companies will embed these tools into their core products and services without being offered incentives.

“But regulations are looming,” says Mr Ghosh. “There will be serious inquiries, and there is going to be a robust overhaul of what these companies can do. It’s only a matter of time.”
