Demis Hassabis plays to DeepMind’s strengths by using artificial intelligence for social impact

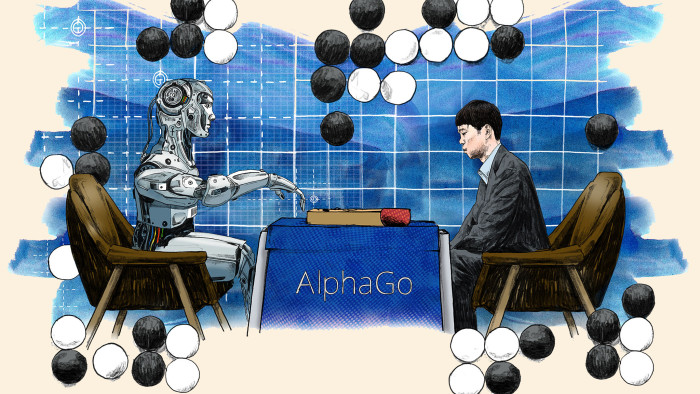

On a chilly March afternoon last year in the South Korean capital Seoul, a computer algorithm made history.

A program called AlphaGo beat the reigning human world champion at go, an ancient Chinese board game considered to be one of the most complex pastimes man has ever devised.

The game has remained an inviolably human pursuit for centuries, and one of the hardest challenges for artificial intelligence (AI) because of the vast number of possible moves — more than the number of atoms in the universe — and the need to employ creativity to win.

In Seoul’s Four Seasons hotel, AlphaGo’s victory over five games was ruthless: Lee Sedol, the 33-year-old human go grandmaster, lost 4-1. At a press conference afterwards, he said with a trace of wonder: “Today, I am speechless.”

Just two months earlier, AlphaGo had been featured on the cover of Nature, the premier peer-reviewed scientific journal, having defeated the human European go champion 5-0. That and its triumph over Lee cemented its position as a rare scientific breakthrough that came years ahead of scientists’ predictions.

“This is the first time that a computer program has defeated a human professional player in the full-sized game of go, a feat previously thought to be at least a decade away,” the team behind it wrote.

AlphaGo is the brainchild of DeepMind Technologies, a London-based AI company acquired by Google in 2014 for £400m. The AlphaGo feature was the second time in a year DeepMind had made the cover of Nature. Ten months later, last October, the team made a third appearance in the journal, making them singularly prolific among their academic peers.

With its cadre of researchers, from Bayesian mathematicians to cognitive neuroscientists, statisticians and computer scientists, DeepMind has amassed arguably the most formidable community of world-leading academics specialising in machine intelligence anywhere in the world.

“What we are trying to do is a unique cultural hybrid — the focus and energy you get from start-ups with the kind of blue-sky thinking you get from academia,” says Demis Hassabis, co-founder and chief executive. “We’ve hired 250 of the world’s best scientists, so obviously they’re here to let their creativity run riot, and we try and create an environment that’s perfect for that.”

DeepMind’s researchers have in common a clearly defined if lofty mission: to crack human intelligence and recreate it artificially.

The undertaking is one that 40-year-old Hassabis has been pondering ever since he became a professional chess master at 13 and the world number two in his age group. “Playing chess at that young age got me thinking, how does the brain come up with moves and how do you make plans?” he says. “I got my first computer when I was eight. I bought it with winnings from chess competitions. One of the first big programs I wrote when I was 11 was an AI to play Othello. It wasn’t particularly good, but it could give someone a game.”

Today, the goal is not just to create a powerful AI to play games better than a human professional, but to use that knowledge “for large-scale social impact”, says DeepMind’s other co-founder, Mustafa Suleyman, a former conflict-resolution negotiator at the UN.

The line might sound insincere if it came from an executive in Silicon Valley, where practically every start-up believes it is about to change the world. DeepMind, however, might actually be understating the sea-changes it is driving: its scientific advances are already employed in complex real-world scenarios that require pattern recognition, long-term planning and decision-making.

AlphaGo-like algorithms are, for example, being used to study protein-folding to speed up new drug discoveries at the UK’s Crick Institute; to analyse medical images to allow sharper cancer diagnoses and treatment plans at London’s University College Hospital; and to save enormous amounts of energy in power-hungry data centres at Google. In the last of these, DeepMind’s experiment resulted in energy savings of 15 per cent — or 40 per cent of cooling energy — translating to millions of dollars. The company now hopes to expand its range of clients to the UK’s National Grid and other utilities providers.

“We learn so much about the strengths and weaknesses of our algorithms by testing them on large-scale, real-world, noisy and messy data sets,” says Suleyman. “It’s a pretty unique way to make progress with our toughest social problems.”

To solve seemingly intractable problems in healthcare, scientific research or energy, it is not enough just to assemble scores of scientists in a building; they have to be untethered from the mundanities of a regular job — funding, administration, short-term deadlines — and left to experiment freely and without fear.

“If you look at how Google worked five or six years ago, [its research] was very product-related and relatively short-term, and it was considered to be a strength,” Hassabis says. “[But] if you’re interested in advancing the research as fast as possible, then you need to give [scientists] the space to make the decisions based on what they think is right for research, not for whatever kind of product demand has just come in.”

DeepMind’s three appearances in quick succession in Nature, along with more than 120 papers published and presented at cutting-edge scientific conferences, are a mark of its prodigious scientific productivity. It is also an indication of its special status at Google.

“Our research team today is insulated from any short-term pushes or pulls, whether it be internally at Google or externally. We want to have a big impact on the world, but our research has to be protected,” Hassabis says. “We showed that you can make a lot of advances using this kind of culture. I think Google took notice of that and they’re shifting more towards this kind of longer-term research.”

DeepMind has six more early manuscripts that it hopes will be published by Nature, or by that other most highly regarded scientific journal, Science, within the next year. “We may publish better than most academic labs, but our aim is not to produce a Nature paper,” Hassabis says. “We concentrate on cracking very specific problems. What I tell people here is that it should be a natural side-effect of doing great science.”

Structurally, DeepMind’s researchers are organised into four main groups with titles such as “Neuroscience” or “Frontiers” (a group comprising mostly physicists and mathematicians who test the most futuristic theories in AI). Beyond these are several smaller teams with deeper specialities. Many of the project managers are former video game producers who joined from Hassabis’s previous company, Elixir Studios, an independent games developer.

Every eight weeks, scientists present what they have achieved to team leaders, including Hassabis and Shane Legg, head of research, who decide how to allocate resources to the dozens of projects. “It’s sort of a bubbling cauldron of ideas, and exploration, and testing things out, and finding out what seems to be working and why — or why not,” Legg says.

Projects that are progressing rapidly are allocated more manpower and time, while others may be closed down, all in a matter of weeks. “In academia you’d have to wait for a couple of years for a new grant cycle, but we can be very quick about switching resources,” Hassabis says.

At any point in time, the company also has two or three special forces-style units called “strike teams” that are formed temporarily to achieve a particular goal. “This is what we did with AlphaGo. Once it started showing promise in the first six months, we put a large team of 15 people with specialised skills on it, to push that to the end,” Hassabis says. “It allows us to pick exactly the right specialists to make the perfect complementary team without being beholden to traditional reporting lines. So, they’re like on secondment to that project, and then they go back to their original teams.”

This organisational culture has been a magnet for some of the world’s brightest minds. Jane Wang, a cognitive neuroscientist at DeepMind, used to be a postdoctoral researcher at Northwestern University in Chicago, and says that she was attracted to DeepMind’s clear, social mission. “I have interviewed at other industry labs, but DeepMind is different in that there isn’t pressure to patent or come up with products — there is no issue with the bottom line. The mission here is about being curious,” she says.

For Matt Botvinick, neuroscience team lead, joining DeepMind was not just a career choice but a lifestyle change too. The former professor who led Princeton University’s Neuroscience Institute continues to live in the US, where his wife is a practising physician, and commutes to DeepMind’s labs in London every other week.

“At Princeton, I was surrounded by people I considered utterly brilliant and had no interest in working in an environment any less focused on primary scientific questions,” he says. “But I couldn’t resist the opportunity to come here because there is something qualitatively new going on, both with the scale and the spirit of ideas.”

What sets DeepMind apart from academic labs, he says, is its culture of cross-disciplinary collaboration, reflected in the company’s hiring of experts who can cut across different domains, from psychology to deep learning, physics or computer programming.

“In a lot of research institutions, things can become siloed. Two neighbouring labs could be working on similar topics but never exchange and pool information,” Botvinick says. “Unlike any place I’ve ever experienced before, all conversations are enhanced rather than undermined by differences in background.”

Illustration by Scott Chambers
